GTC 2026: What NVIDIA’s Roadmap Means for AI Infrastructure in Canada

At yesterday’s NVIDIA GTC 2026 keynote, CEO Jensen Huang laid out a vision that made one thing clear: the future of computing will be built on AI infrastructure at an unprecedented scale.

For organizations deploying AI systems, whether enterprises, research institutions, hyperscalers, or sovereign AI initiatives, the scale and complexity of the required compute infrastructure are growing dramatically.

For Images et Technologie, an NVIDIA infrastructure partner and Canadian system integrator specializing in high-performance computing and AI infrastructure, the keynote reinforced a major shift already underway in the market:

AI infrastructure is becoming one of the most important technology platforms of the next decade.

Below are the key announcements and what they mean for organizations building AI clusters across Canada.

Watch the Full NVIDIA GTC 2026 Keynote

If you would like to see the entire keynote presentation from Jensen Huang, you can watch it here:

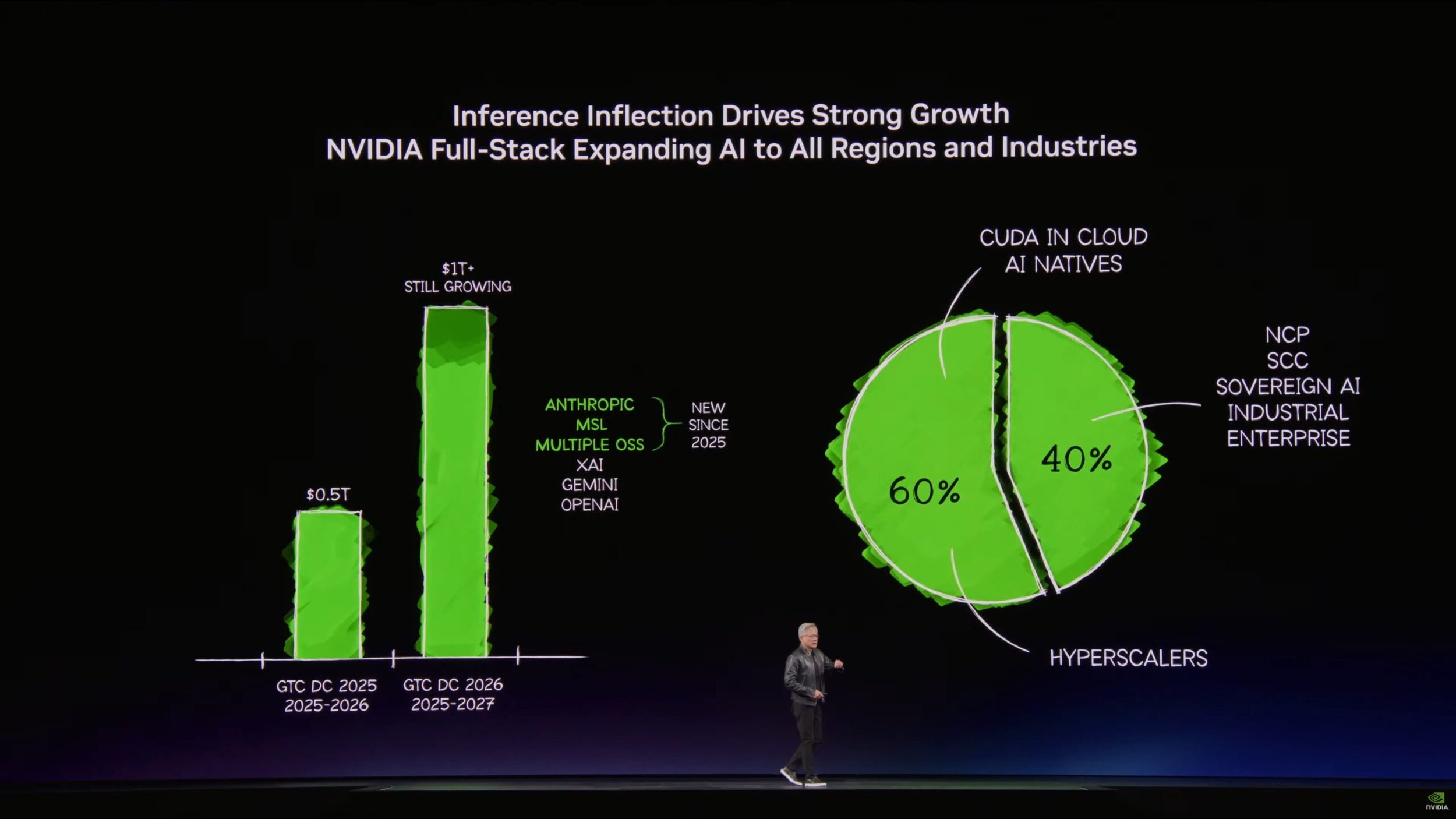

AI Compute Demand Is Exploding

One of the most striking points in the keynote was the scale of demand for AI computing.

According to Huang, AI computing demand has increased roughly one million times in the past few years, driven by generative AI, reasoning models, and emerging agentic AI systems.

But the shift is not just about training large models anymore.

The industry has now entered what NVIDIA calls the inference inflection point.

Modern AI systems continuously generate tokens as they reason, interact with tools, and execute tasks. Every one of those actions requires inference compute.

This fundamentally changes how infrastructure must be designed.

Data centers are no longer simply storing and processing data.

They are becoming AI factories producing tokens as an industrial output.

NVIDIA GTC 2026 Keynote Inference Inflection Growth

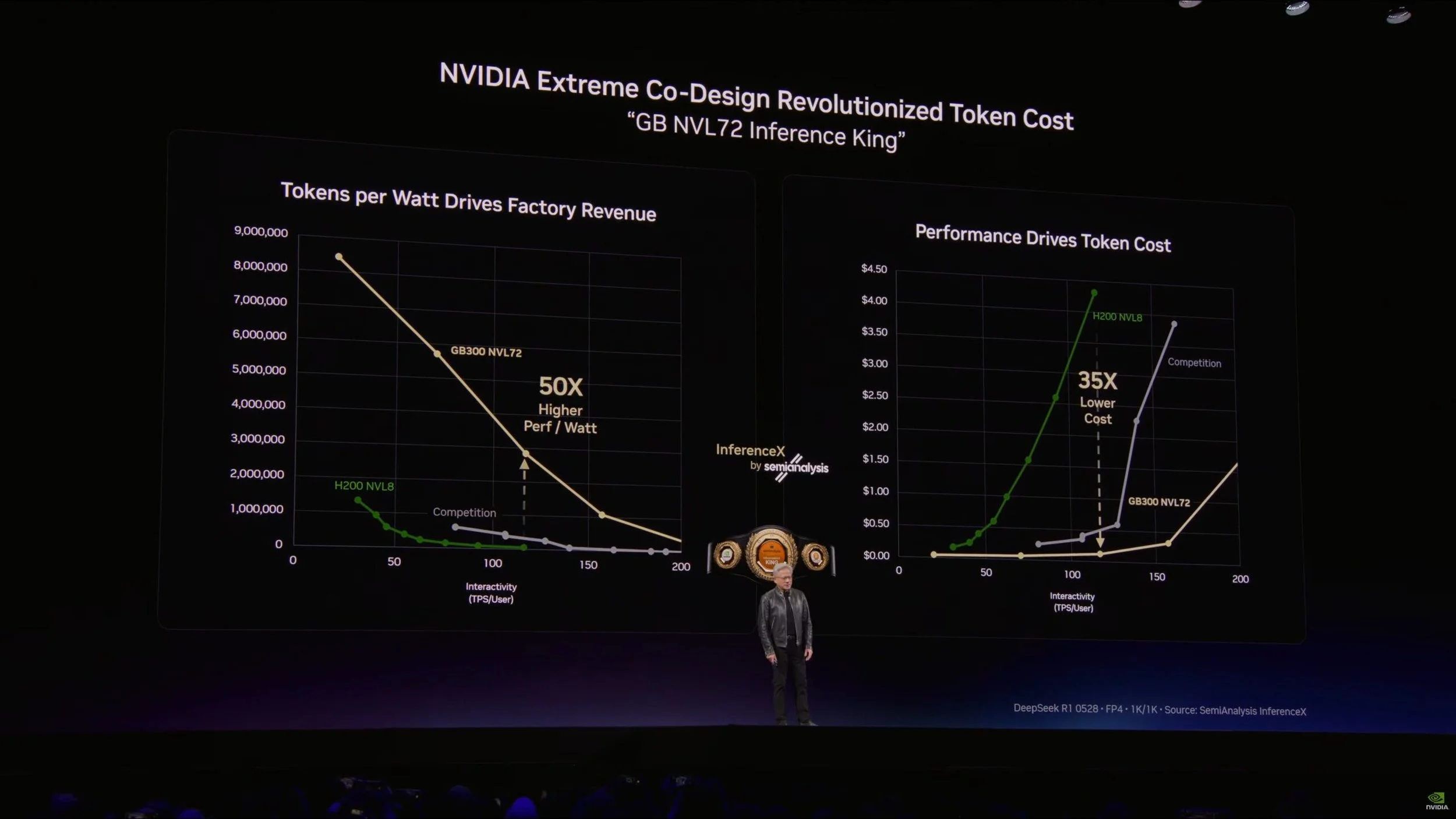

From Data Centers to AI Factories

The concept of the AI factory was one of the central themes of this year’s keynote.

Instead of traditional data center metrics, the economics of AI infrastructure are increasingly defined by:

power availability

tokens generated per watt

inference performance

system-level efficiency

Power—not silicon—is becoming the ultimate constraint.

That means AI clusters must be designed as highly optimized compute production systems, combining GPUs, networking, storage, cooling, and software into tightly integrated architectures.

For organizations building AI capabilities, this is where experienced infrastructure partners become critical.

Designing and deploying these environments requires expertise across AI, HPC infrastructure, and large-scale system integration.

NVIDIA GTC 2026 Keynote Extreme Co-Design Revolutionized Token Cost

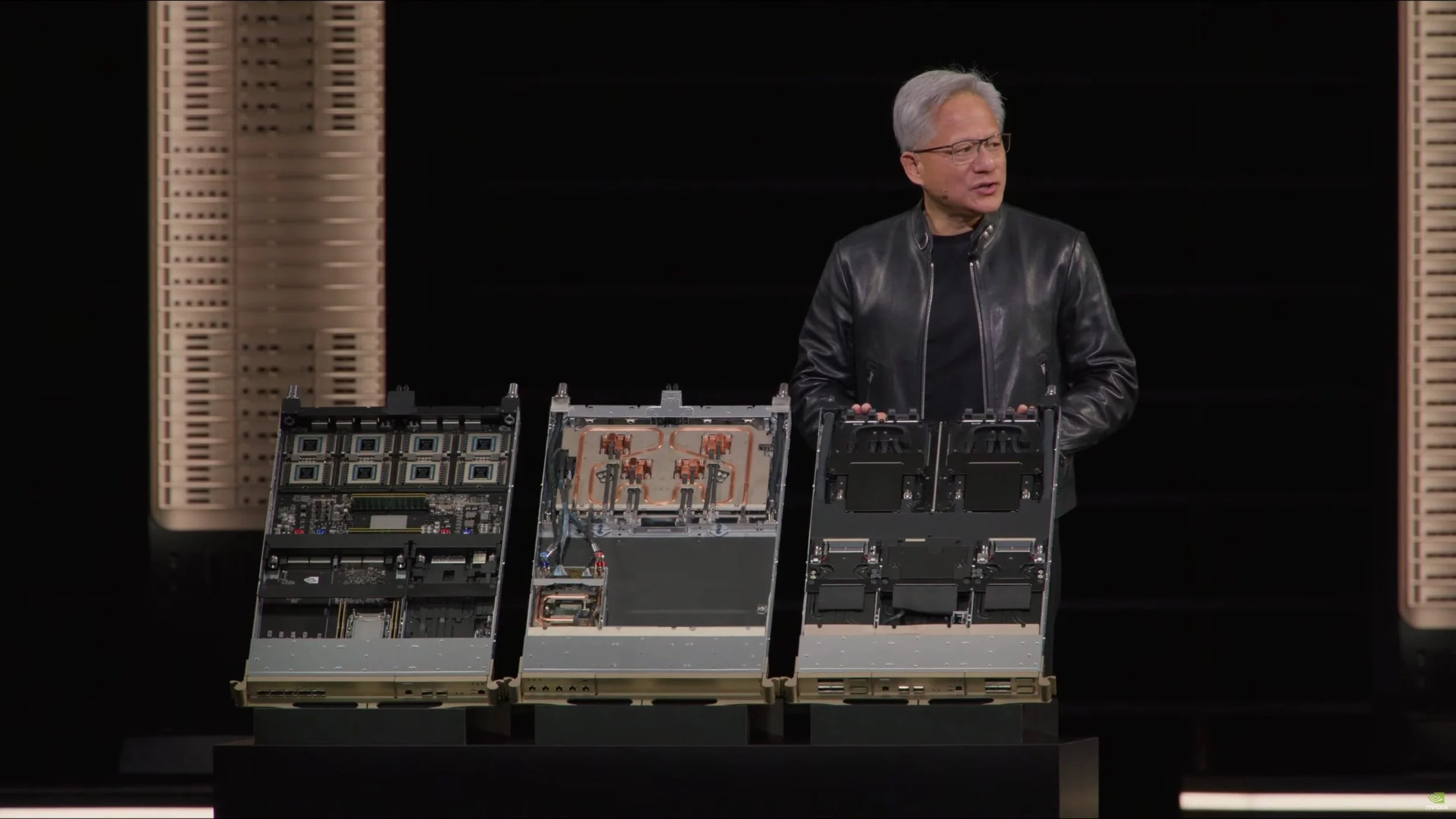

Vera Rubin: NVIDIA’s Next-Generation AI Platform

The largest hardware announcement at GTC was Vera Rubin, NVIDIA’s next-generation computing platform succeeding the Blackwell architecture.

Rather than introducing a single chip, NVIDIA revealed an entire vertically integrated AI computing platform.

The Vera Rubin platform includes:

Vera CPU

Rubin GPUs

BlueField-4 DPUs

Spectrum networking

AI-optimized storage architecture

rack-scale AI systems

These systems are designed to support every phase of the AI lifecycle—from training to large-scale inference to agentic workloads.

For organizations building AI clusters, this shift toward integrated rack-scale systems represents a significant evolution in infrastructure design.

NVIDIA GTC 2026 Keynote Vera Rubin Node

Rack-Scale Systems Become the New Unit of Compute

One of the most important architectural shifts highlighted in the keynote is that the rack—not the GPU—is becoming the fundamental unit of compute.

Modern AI infrastructure increasingly consists of:

liquid-cooled rack-scale GPU systems

high-bandwidth NVLink interconnects

integrated networking fabrics

high-performance storage architectures

orchestration CPUs and DPUs

Organizations are no longer simply purchasing GPU servers.

They are building AI factories designed for continuous AI production.

NVIDIA GTC 2026 Keynote Vera Rubin NVL72

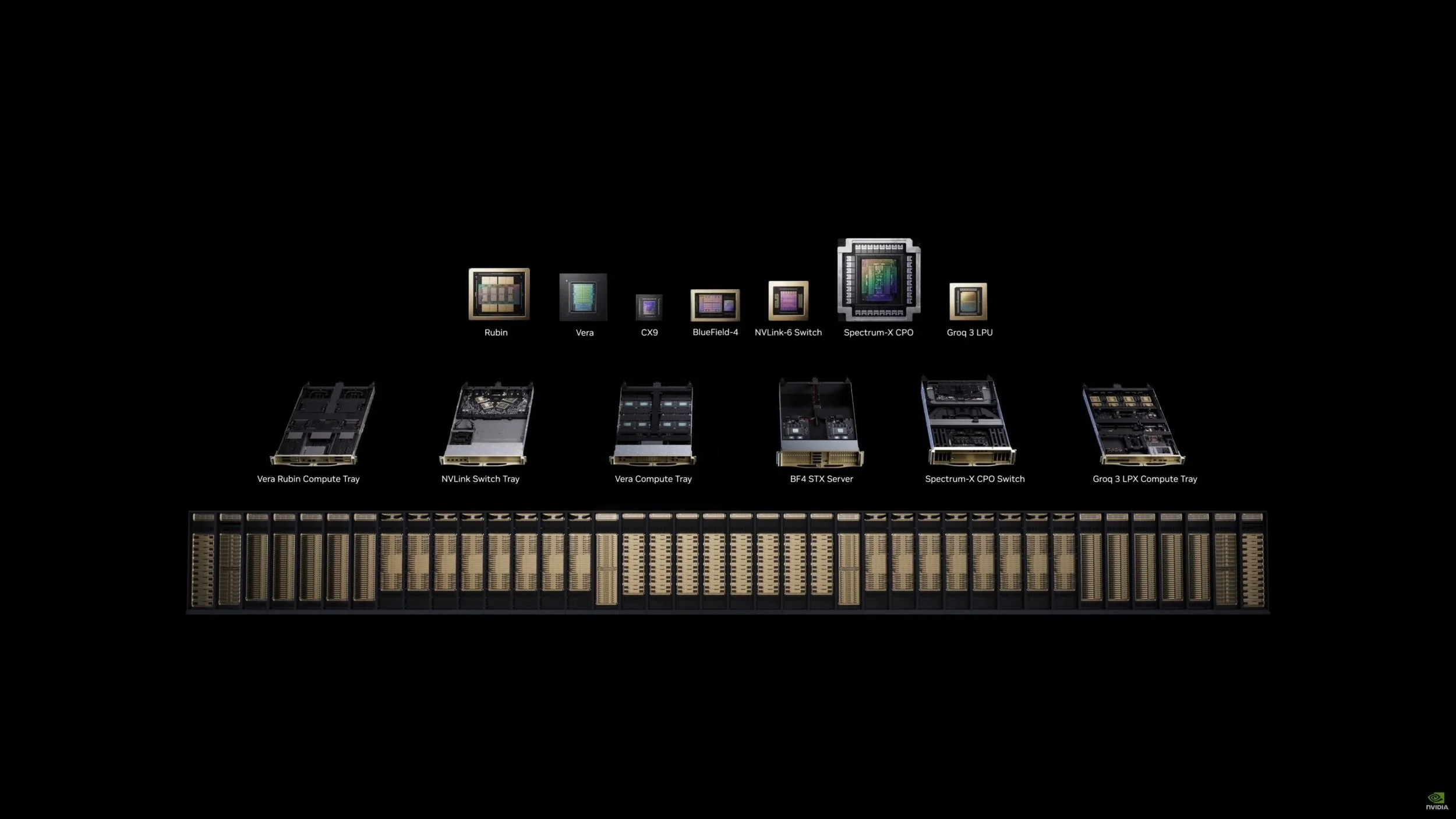

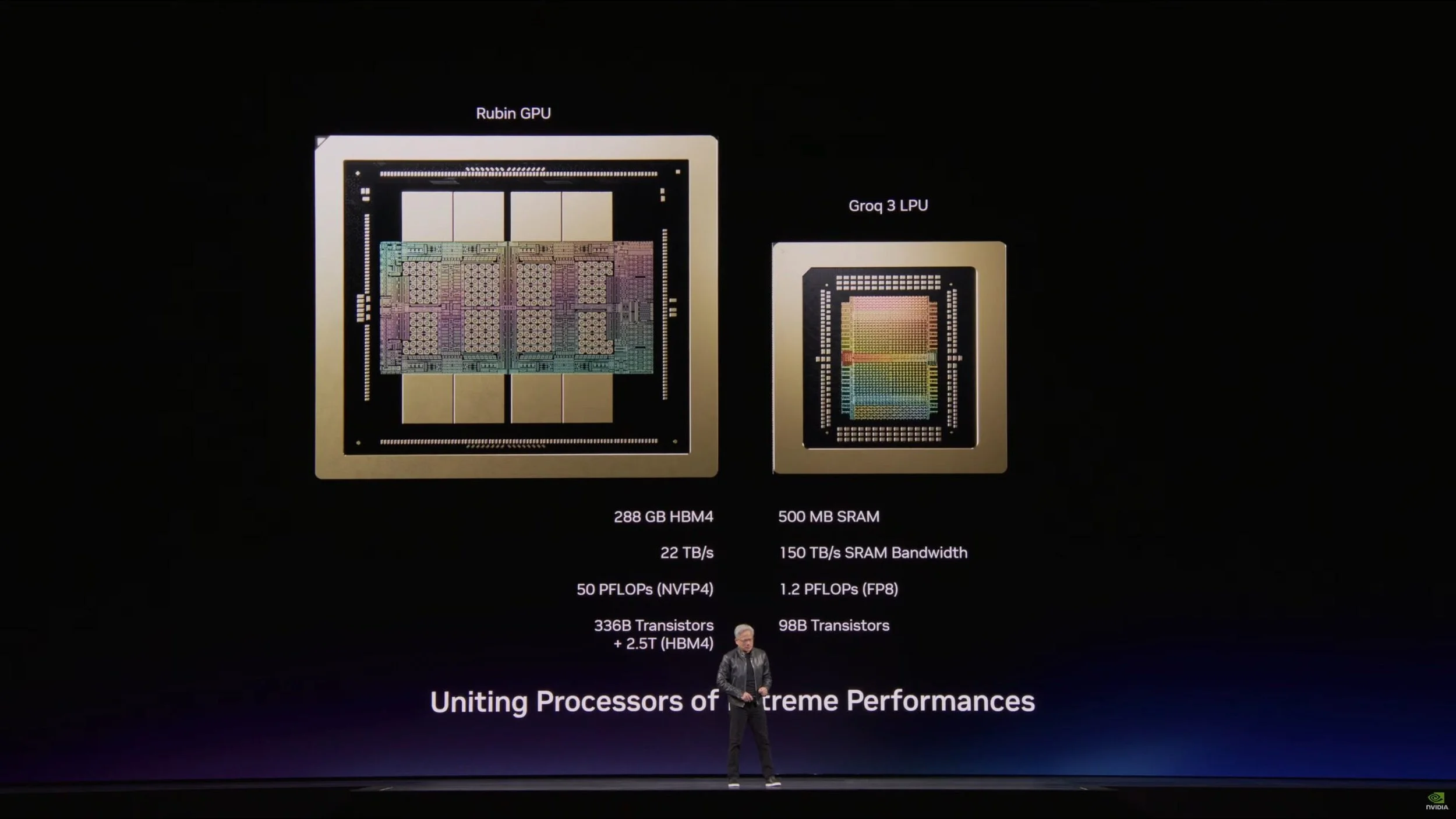

Heterogeneous Compute: GPUs + LPUs

Another interesting development is NVIDIA’s integration of Groq LPUs alongside Rubin GPUs.

Groq processors are optimized specifically for ultra-fast token generation and low-latency inference.

This enables a disaggregated inference architecture where:

Rubin GPUs handle large-scale compute workloads

Groq LPUs accelerate token generation and latency-sensitive workloads

This hybrid approach reflects a broader trend: future AI clusters will increasingly combine multiple compute architectures optimized for specific AI tasks.

NVIDIA GTC 2026 Keynote Groq LPU 2

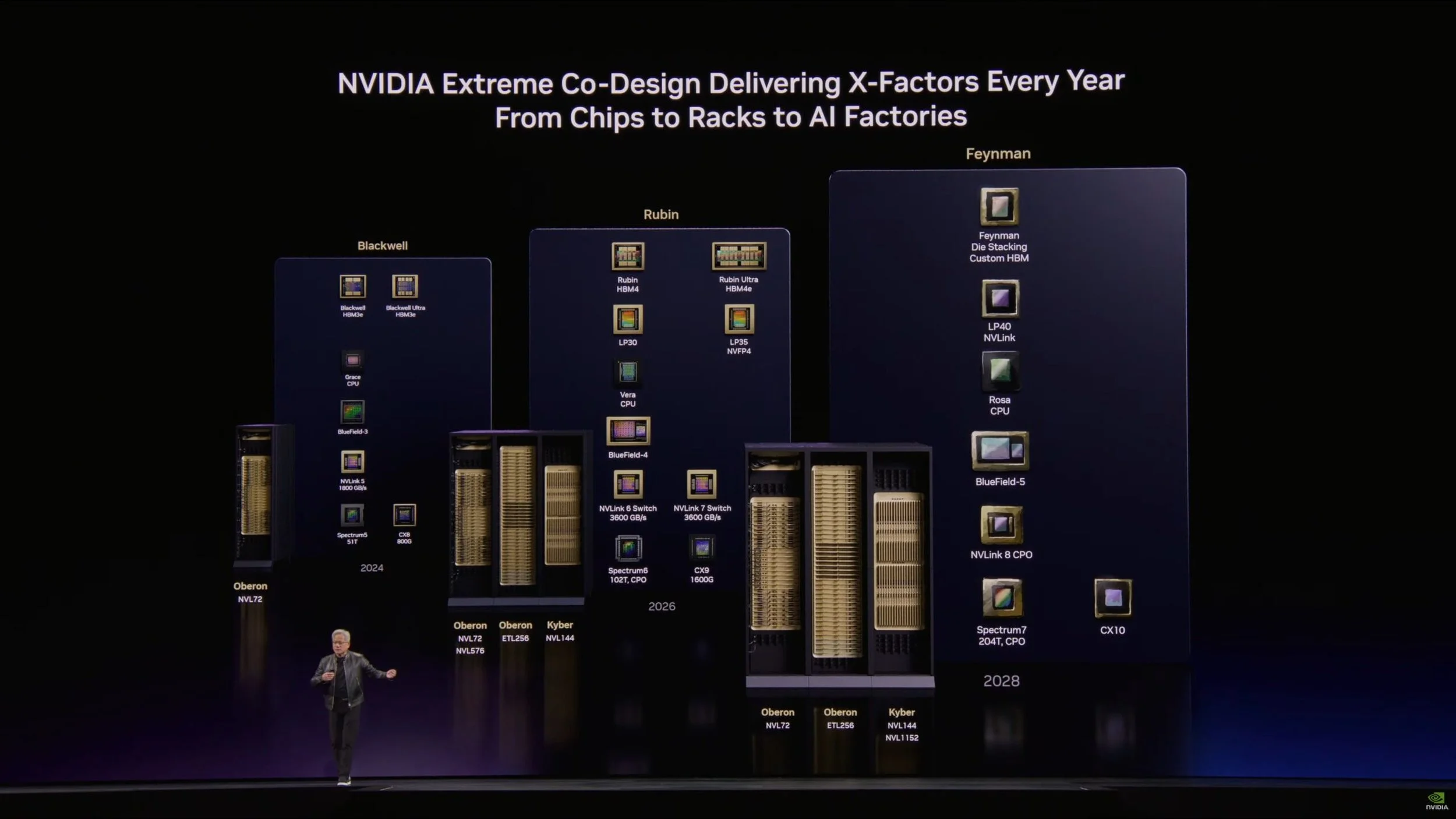

The Roadmap Beyond Rubin: Feynman and Rosa

Looking further ahead, NVIDIA also revealed the next architecture generation: Feynman.

This platform introduces several new technologies, including:

a new CPU architecture called Rosa

next-generation LPUs

BlueField-5 DPUs

CX10 networking

Kyber scale-up fabrics

optical networking technologies

Together these innovations advance every major pillar of AI infrastructure:

compute

networking

memory

storage

security

For organizations planning long-term AI infrastructure investments, NVIDIA’s roadmap highlights how quickly the ecosystem is evolving.

NVIDIA GTC 2026 Keynote NVIDIA Roadmap

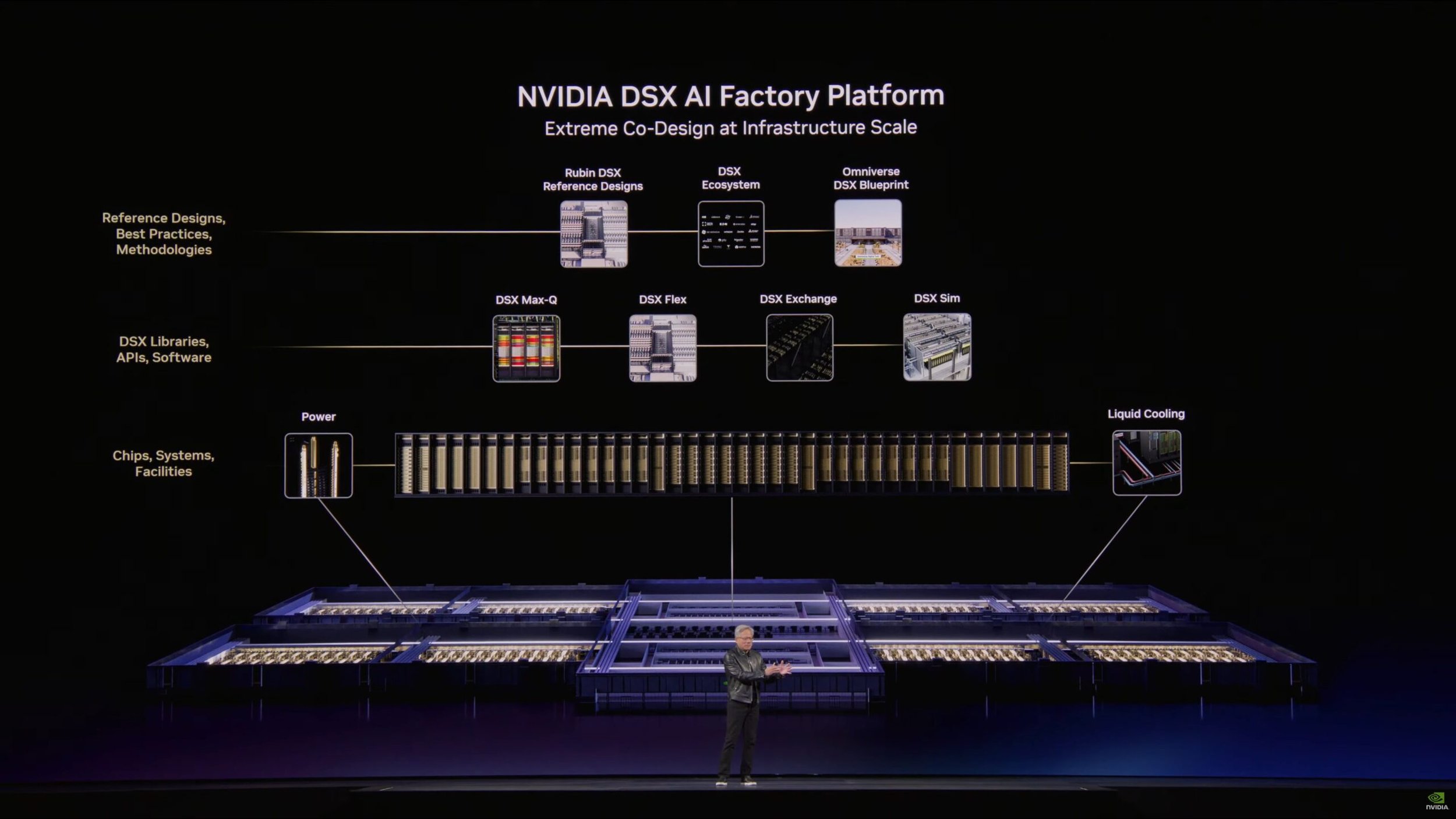

Designing AI Factories Before They Exist

Another major announcement was NVIDIA DSX, a digital twin platform for designing AI factories.

Using NVIDIA Omniverse, organizations can simulate entire AI data centers before building them.

This includes modeling:

cooling systems

electrical infrastructure

rack layouts

networking topology

operational performance

The goal is simple: maximize tokens per watt, the core productivity metric of AI infrastructure.
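To make the metric concrete, here is a minimal sketch of the arithmetic behind it, assuming "tokens per watt" is read as tokens per second per watt (equivalently, tokens per joule). The function name and every figure below are hypothetical and chosen only for illustration; they are not NVIDIA specifications or keynote numbers.

```python
def tokens_per_second(power_budget_watts: float, tokens_per_joule: float) -> float:
    """Throughput of a power-capped facility.

    A watt is one joule per second, so watts * tokens/joule = tokens/second.
    """
    if power_budget_watts < 0 or tokens_per_joule < 0:
        raise ValueError("inputs must be non-negative")
    return power_budget_watts * tokens_per_joule


# Hypothetical figures, used only to illustrate the arithmetic.
POWER_BUDGET_W = 1_000_000  # assumed fixed power budget: a 1 MW facility

baseline = tokens_per_second(POWER_BUDGET_W, tokens_per_joule=2.0)
improved = tokens_per_second(POWER_BUDGET_W, tokens_per_joule=3.0)

print(f"baseline: {baseline:,.0f} tokens/s")
print(f"improved: {improved:,.0f} tokens/s")
print(f"gain at the same power budget: {improved / baseline:.1f}x")
```

When the power budget is fixed, output scales only with efficiency, which is why a facility limited by available power is evaluated on tokens per watt rather than on raw chip count.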

NVIDIA GTC 2026 Keynote DSX AI Factory Platform

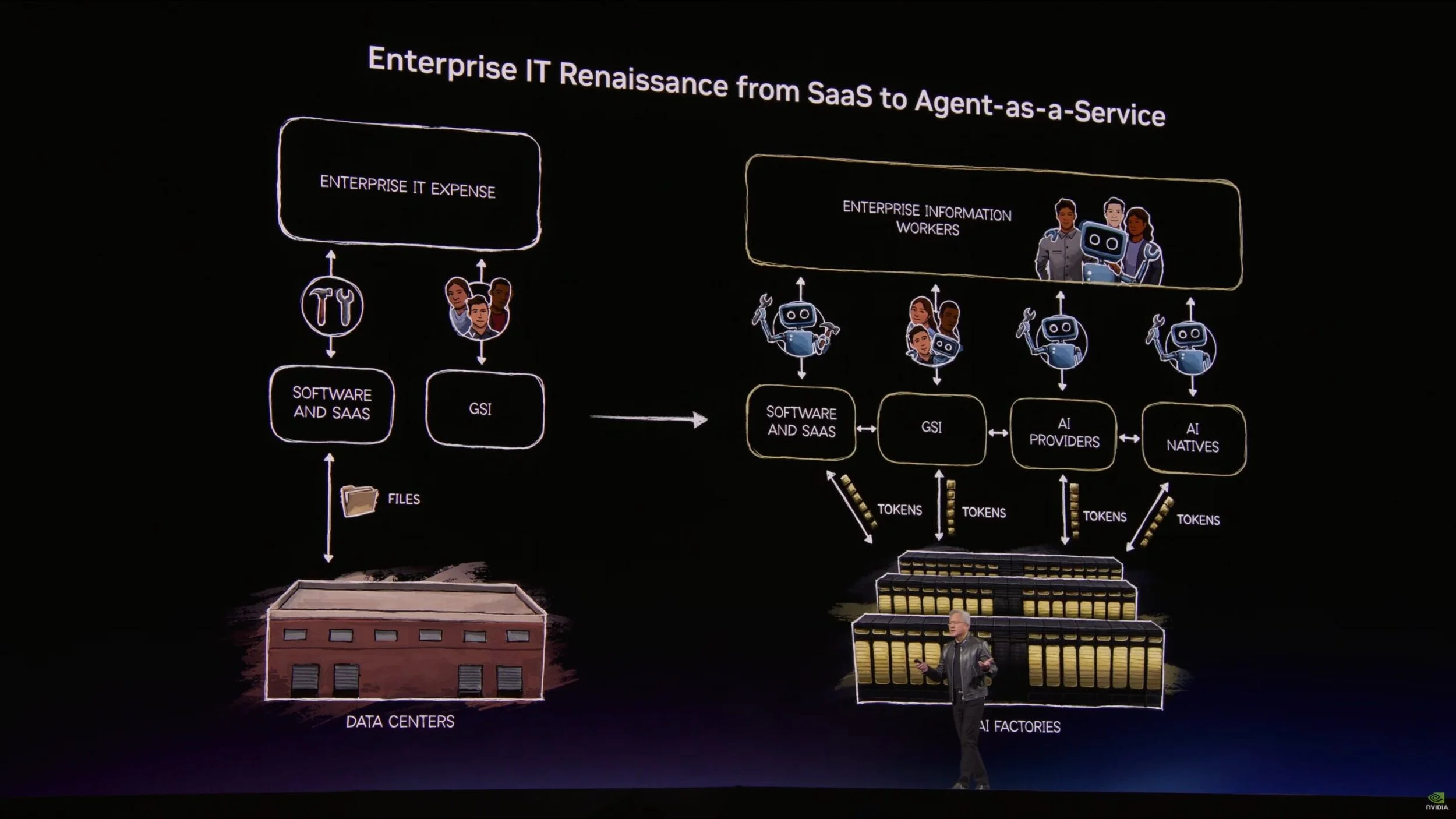

The Rise of Agentic AI

Beyond hardware, NVIDIA introduced several initiatives around agentic AI systems, including support for the open-source project OpenClaw.

OpenClaw functions as an operating system for AI agents, allowing them to:

reason

plan

access tools

execute workflows

NVIDIA also introduced the NeMoClaw enterprise stack, which adds security, policy enforcement, and enterprise governance to agentic AI deployments.

These systems will significantly increase inference demand, further accelerating the need for large-scale AI infrastructure.

NVIDIA GTC 2026 Keynote Agents As A Service (AGaaS)

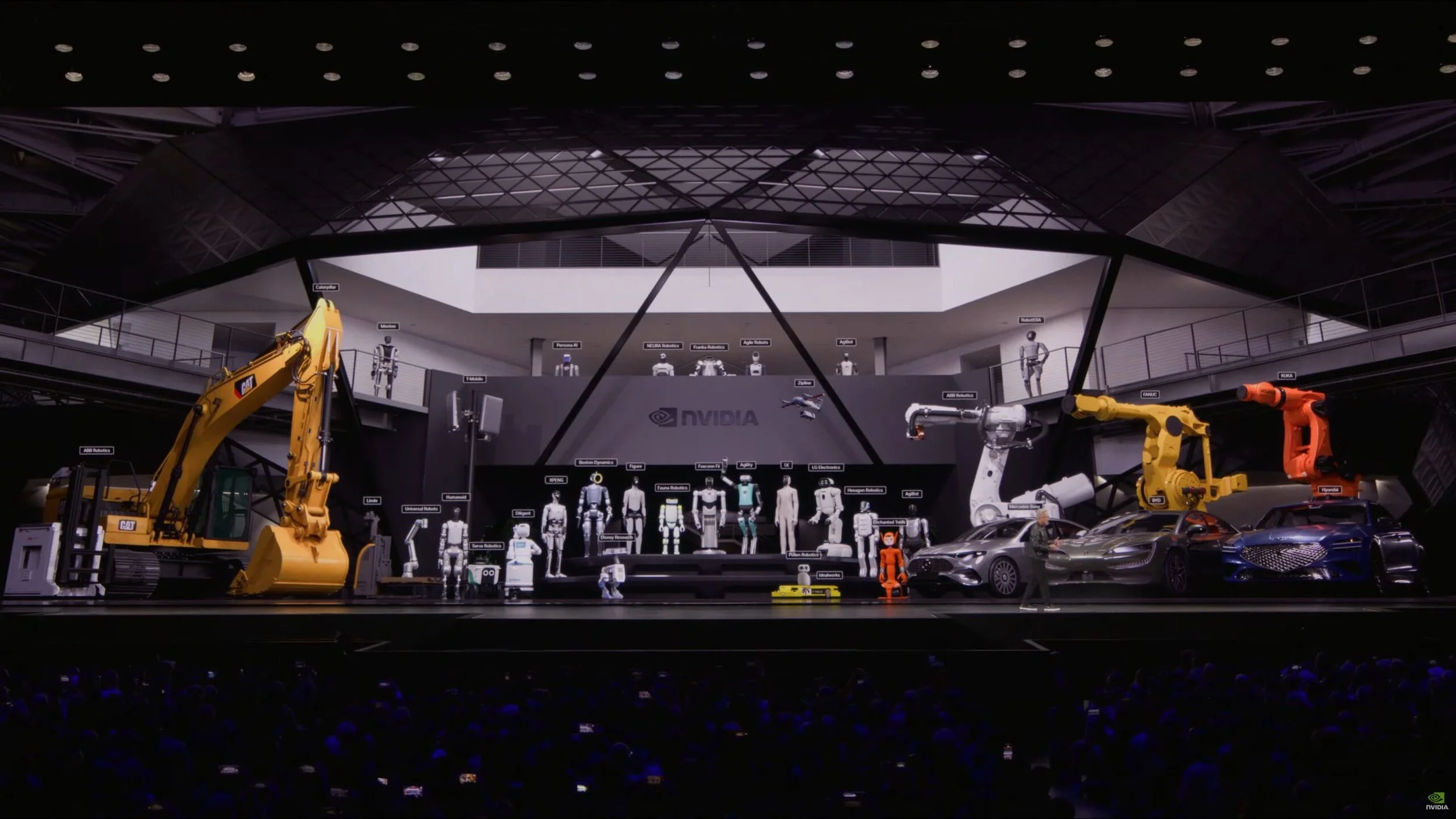

Physical AI and Robotics

The keynote also highlighted NVIDIA’s continued expansion into physical AI, where AI systems interact with the physical world through robots and autonomous systems.

Updates included:

expanded Isaac robotics simulation tools

Omniverse-based training environments

new automotive partnerships with BYD, Hyundai, Nissan, and Geely

collaboration with Uber for robotaxi deployment

These workloads will increasingly rely on both centralized AI factories and distributed edge AI infrastructure.

NVIDIA GTC 2026 Keynote Robotics

What This Means for the Canadian Market

For Canadian organizations across research, enterprise, and government sectors, the implications are significant.

AI infrastructure is rapidly becoming a strategic national capability.

Canada already plays an important role in the global AI ecosystem through its strong research institutions, startup ecosystem, and enterprise adoption of AI technologies.

However, building large-scale AI infrastructure requires more than GPUs.

It requires expertise across:

high-performance computing

AI software stacks

networking and storage architectures

power and cooling infrastructure

large-scale system integration

This is exactly where Images et Technologie operates.

For over 30 years, our team has helped Canadian organizations design, deploy, and operate high-performance computing environments.

Today, we are applying that expertise to help customers build AI clusters and AI infrastructure based on NVIDIA platforms.

Building AI Infrastructure in Canada?

Images et Technologie helps organizations design and deploy NVIDIA-based GPU clusters and AI infrastructure.

➡ Speak with an AI infrastructure specialist

The Role of Infrastructure Partners

As NVIDIA continues to push the boundaries of AI infrastructure, the role of local system integrators becomes even more important.

Hyperscalers may build their own AI factories, but many organizations—including enterprises, research institutions, and sovereign AI initiatives—require trusted partners to design and deploy these systems.

At Images et Technologie, our role is to help Canadian organizations:

architect AI clusters optimized for their workloads

deploy NVIDIA GPU infrastructure

integrate networking, storage, and AI software stacks

operate high-performance AI environments locally

As AI infrastructure becomes more complex, the ability to design and integrate these systems becomes just as important as the hardware itself.

Planning Your Next AI Infrastructure Project

GTC 2026 reinforced that AI infrastructure is becoming one of the defining technology platforms of our time.

With platforms like Vera Rubin and future architectures like Feynman, NVIDIA is building the foundation for the next generation of computing.

Across Canada, organizations are beginning to plan the infrastructure required to support this new era of AI, from enterprise AI platforms to sovereign AI initiatives and research clusters.

Contact Images et Technologie to discuss your next AI infrastructure project and how we can help design and deploy the right solution for your organization.

For more than 30 years, Images et Technologie has helped Canadian organizations design and deploy high-performance computing infrastructure.

Today, we are helping customers build the AI factories and GPU clusters that will power the next generation of innovation.

If you're exploring:

deploying NVIDIA GPU clusters

scaling AI inference infrastructure

building on-prem or hybrid AI environments

designing high-performance storage and networking for AI workloads

Let’s talk about your next AI infrastructure project.